A collection of miscellaneous learnings and reflections around recent AI progress and associated curiosities. Arranged in no particular order: feel free to skip around to sections of interest. Will update the online version periodically with new learnings.

Dreaming for neural nets

Dreams may have evolved to assist generalisation. What if AIs could overcome the problem of “overfitting” during learning by learning how to dream?

1) Deep neural nets (DNNs) are prone to “overfitting” as they learn: performance on the training data keeps improving, but the network fails to generalise, so performance on unseen data stalls or even gets worse

2) Dreams may be a biological mechanism for increasing generalisability across neural structures: on this hypothesis, nightly dreams evolved to combat the brain’s overfitting to the day’s learning (dream loss could leave an overfitted brain that can still memorise but fails to generalise)

Conclusion: developing wake/sleep/dream optimisation in AIs could improve their generalisation and learning. (Inspiration)
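To make the overfitting in point 1 concrete, here is a toy Python sketch. It uses hypothetical data and a 1-nearest-neighbour "memoriser" as a stand-in for an overfitted network (no neural net or dreaming is involved). The memoriser reproduces the noisy training labels perfectly, yet does worse on unseen points than a simple two-parameter line.

```python
# Toy illustration of overfitting (hypothetical data, not from any study).
# True relationship: y = 2x. Training labels carry alternating +1/-1 noise.

def true_fn(x):
    return 2 * x

train_x = list(range(10))
train_y = [true_fn(x) + (1 if x % 2 == 0 else -1) for x in train_x]

# Held-out points never seen in training, scored against the noise-free truth.
test_x = [k + 0.25 for k in range(9)]
test_y = [true_fn(x) for x in test_x]

def memoriser(x):
    """1-nearest-neighbour lookup: returns the label of the closest training
    point, noise included, so it memorises rather than generalises."""
    nearest = min(range(len(train_x)), key=lambda i: abs(train_x[i] - x))
    return train_y[nearest]

def fit_line(xs, ys):
    """Ordinary least-squares fit of y = a + b*x (only two parameters)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    a = my - b * mx
    return lambda x: a + b * x

line = fit_line(train_x, train_y)

def mse(model, xs, ys):
    """Mean squared error of `model` on the points (xs, ys)."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

for name, model in [("memoriser", memoriser), ("line", line)]:
    print(f"{name}: train MSE={mse(model, train_x, train_y):.3f}, "
          f"test MSE={mse(model, test_x, test_y):.3f}")
# memoriser: train MSE=0.000, test MSE=1.139
# line: train MSE=0.970, test MSE=0.025
```

The memoriser "wins" on the data it has seen and loses on the data it hasn't, which is the pattern the dreaming hypothesis is meant to counteract.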

Embodied AGI

Learnt that DeepMind trains simulated robots to play soccer, then transfers the learned skills onto real robots that can walk, kick, and score, and generally perform quite well!

In an earlier piece, I explored embodied cognition in humans across different environments (e.g. dream states, VR), but it’s just as interesting to think about general embodied intelligence: how synthetic intelligences could be deployed in bodies to interact with the world.

AIs as decision-makers

Artificial intelligence is just the means — the ultimate end is agency.

Have now heard from several people that they use AIs as decision-makers in their workflows. AIs seem to have graduated from powerful tools to systems that scaffold and execute their own work processes (see AutoGPT, GoalGPT).

Humans continue to move further away from the core of work, increasingly outsourcing the physical and cognitive aspects of output-oriented activities: from executors to implementers to supervisors to auditors and rubber-stampers.

Until the 1970s, we enhanced our bodies’ capabilities to work harder:

Packy writes: “The history of human progress up until the 1970s was largely about enhancing our bodies’ capabilities, using new power sources, methods, and materials to create more physical things more quickly and cheaply. This allowed humans to be more productive.”

In the past 50 years, we’ve focused on enhancing our brains’ capabilities to work smarter:

“The past fifty years’ progress has primarily been about enhancing our brains’ capabilities, using new computers, algorithms, and modes of communication to create more digital things and connect over 4 billion people to the internet.”

In the next 50 years, we’ll outsource our brain capabilities to a form of collective intelligence so we can work… less?

Interfaces

If ChatGPT is the CLI, who is developing the GUI equivalent? What could a Generative User Interface look like?

Or is this a false equivalence? Traditional command-line interfaces (CLIs) require precise syntax to execute programs, whereas ChatGPT takes natural language: it is far more user-friendly, and output quality depends on the creativity of the prompt rather than the technical aptitude of the user.

Reconstructive photography

In one of the strangest generative AI uses, Samsung’s moon-mode camera feature apparently fills in lunar detail the sensor never captured, which is closer to substituting a stock photo of the moon than to photographing it.

The extreme version of this is connecting genAI to your camera and having it generate whatever you want to take a picture of. If this becomes a thing, we’d live in a world where even our virtual representations of reality are synthetic, guided by top-down machine heuristics rather than by what is physically in front of the lens.

If authenticity is a slider, generative AI allows virtual spaces to reach their most synthetic extreme.

Automation & the future of work

Tech should be built to complement humans, not substitute for them:

Over time, the value of human labour has gone up: we’re paid more for the same unit of work because we can leverage ourselves with more technology.

Trying to substitute for human function puts an upper bound on machine function: a machine designed to imitate a human can at best match what the human already does

Tests designed to evaluate machine intelligence and effectiveness should focus on augmentation metrics rather than imitation metrics

[Extremely idealistically & probably unrealistically] The solution may not be to slow down tech (progress) but to speed up (human) adaptation

Valuing digital goods

How can you measure the value of digital goods so that it is properly captured in future GDP calculations?

Maybe by asking consumers the question: “How much money would someone have to pay you to stop using [X] service?” as a proxy

An example of a digital consumer good is the metaverse: though it is pretty tiny today, eventually it will be quite big. Maybe half your waking hours are already spent interacting with virtual spaces, and over time these interfaces will only get richer and better

Though you may derive a lot of value from these digital goods, you pay $0 to access them, so that value is not captured in GDP

So a big project for the 21st century will be coming up with new metrics that accurately capture activity in the increasingly digital economy

Source: Lecture, Erik Brynjolfsson
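The willingness-to-accept proxy and the $0-price problem can be combined in a small worked example. This is a minimal sketch under stated assumptions: the survey answers are hypothetical, the function names are made up, and using the median (rather than the mean) is a choice of this sketch, made because a few extreme answers barely move it.

```python
from statistics import median

def typical_valuation(wta_responses):
    """Median answer (in $) to 'How much would someone have to pay you to
    stop using this service?'. Median chosen here (an assumption) because
    it is robust to a handful of extreme answers."""
    if not wta_responses:
        raise ValueError("need at least one survey response")
    return median(wta_responses)

def uncounted_value(wta_responses, price_paid=0):
    """Per-user value that spending-based GDP misses: GDP records only the
    price paid, so for a free service the whole valuation goes uncounted."""
    return typical_valuation(wta_responses) - price_paid

# Hypothetical annual answers in dollars (illustrative only, not real data).
responses = [50, 120, 200, 300, 1_000_000]
print(typical_valuation(responses))                # 200
print(uncounted_value(responses))                  # 200 (free service)
print(uncounted_value(responses, price_paid=120))  # 80 (paid service)
```

The outlier answer of $1,000,000 would drag a mean far upward but leaves the median at $200; and for the free service, none of that $200 shows up in GDP.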

AIs as aesthetes

(More thorough 2018 reflection on AI and art here)

Creating aesthetic objects may not be a capacity strictly reserved for humans

Contemporary art techniques rely heavily on computation (for example, electronic music, digital art, collages and montages)

In fact, AI can liberate art from its dependence on technique and physical work

Experiencing art may not be a capacity strictly reserved for humans

If we construe aesthetic experience as Immanuel Kant did (he held that a pure aesthetic judgement is independent of charm and emotion), then an aesthetic experience need not involve emotion

Instead, an aesthetic experience reduces to the ability to identify, analyse, and point out certain structures in an artwork. On that reading, AI can have an aesthetic experience

Case in point: there are now art contests judged by AI (Huawei, 2018)

AI can have an aesthetic experience in an informational sense (in that it can manipulate & process information). A human’s aesthetic experience of an art piece is restricted — there’s a whole dimension to art that we don’t see because of our culture, experiences, & understanding as individuals.

Could AIs, as disinterested observers, appreciate art more universally and wholly than humans? Could they be better judges of aesthetic value as a result of the uninterrupted, real-time access they have to cultural and world knowledge?

Characteristics & impacts of foundation models

Learning: GPT-4 is pretrained with self-supervised learning: the system’s objective is simply to predict the next word in a text, so the training labels come from the text itself. From this training, surprisingly advanced capabilities have emerged over the last five years. Next-word prediction at this level seems to imply some kind of world model, which raises interesting questions about how the human brain works
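A toy sketch of where the training signal comes from in self-supervised next-word prediction: the "labels" are just the words that actually follow in raw text, so no human annotation is needed. This is a bigram counter, not how GPT-4 works internally (GPT-4 is a large transformer operating on tokens); the corpus and function names are hypothetical.

```python
from collections import Counter, defaultdict

def make_training_pairs(text):
    """Turn raw text into (context word, next word) pairs. The 'label' for
    each position is simply the word that comes next (self-supervision)."""
    words = text.lower().split()
    return list(zip(words, words[1:]))

def train_bigram(pairs):
    """Count how often each word follows each context word."""
    counts = defaultdict(Counter)
    for context, nxt in pairs:
        counts[context][nxt] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen.
    Ties go to the follower seen first (Counter.most_common ordering)."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

corpus = "the cat sat on the mat the cat ran"
pairs = make_training_pairs(corpus)
model = train_bigram(pairs)
print(len(pairs))                  # 8
print(predict_next(model, "the"))  # cat
```

Nine words yield eight training examples for free; scaling this idea up (far longer contexts, a neural network instead of counts) is what turns next-word prediction into a rich learning signal.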

Bounds of function: It is difficult to accurately identify and benchmark the capabilities of LLMs because they show a significant capabilities overhang (they can do more than anyone has yet found a way to elicit). Prompt engineering is the best way to get close to identifying the bounds of their performance.

Though it may be tempting to benchmark LLM capabilities against human intelligence, we should avoid doing so, because their knowledge and functioning have unpredictable and inconsistent gaps (e.g. ChatGPT can write rhyming acrostic poems but has trouble counting the words in a sentence, and it hallucinates)

Two camps around LLMs:

1) Those who see AI as stochastic parrots or advanced autocompletes

2) Those who believe in human-level AI

It’s easy to both overestimate and underestimate these systems at the same time: their capabilities surface is fundamentally different from ours, so they may perform spectacularly in some areas and fail badly in others

Short-term lessons:

They are better at generating content; we're better at discriminating between content. We should focus on our comparative advantage

LLMs have the potential to significantly restructure the economy: the role of humans in many cognitive tasks may decline, and we may turn into rubber-stampers

This creates a new category of automation: cognitive automation

What's different from before?

Affects a new category of workers — cognitive workers

Produces outputs that are non-rivalrous (one model can serve many users at once at near-zero marginal cost), and can therefore be rolled out much faster

Chips away at our last advantage against machines — the cognitive advantage

Ideas

The more advanced tech gets, the less useful (and moral) it is to study it in isolation

“There’s an old principle that a computer should never ask a question if it should be able to work out the answer, and the more that computers become invisible parts of our lives the more that they ‘should’ be able to work out”

What would happen if you gradually replaced a human with silicon, one part at a time, Ship of Theseus style?

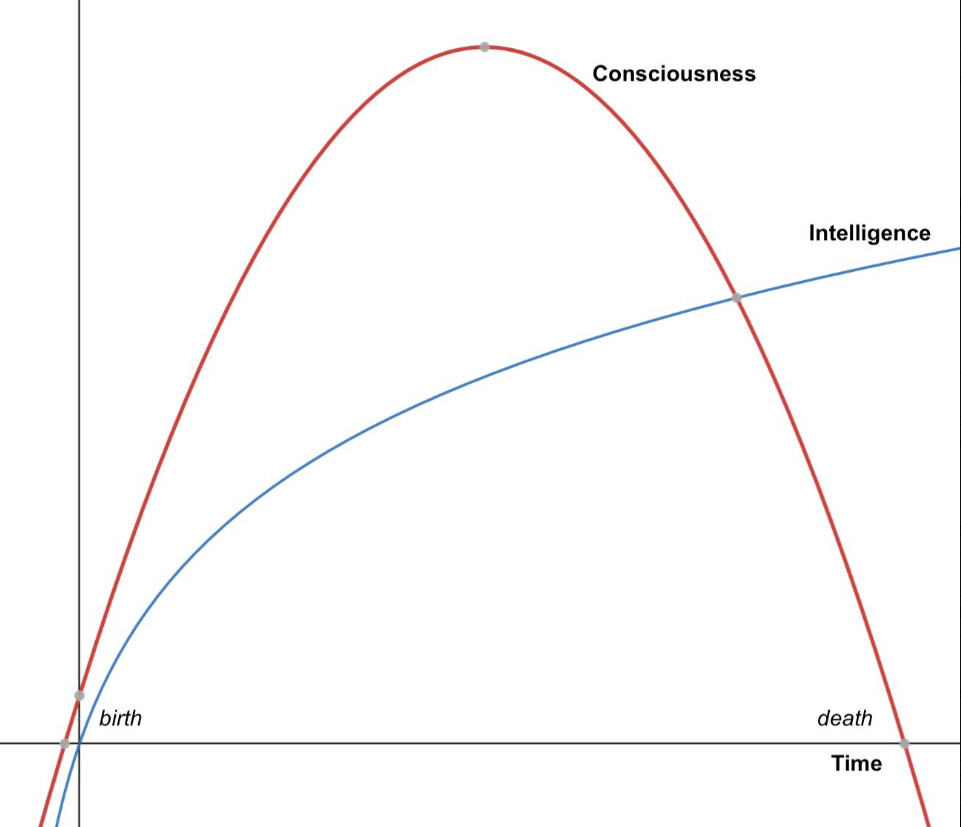

Human ageing is an unbundling of consciousness and intelligence over time. If we grant moral status to beings with only an eventual capacity for rationality (babies), does that imply that the current generation of AIs should have moral status too?